RAM and CPU improvements in pcsc-lite 1.6.x

Between pcsc-lite 1.5.x and pcsc-lite 1.6.x I improved several aspects of resource consumption, both CPU and memory.

System used

For the measurements I used embedded Linux on an ARM9 CPU. This is a typical embedded system. The relative gains are comparable on a desktop PC even if the absolute numbers are different.

The CCID driver is the standard one, with support for 159 readers. Only this driver is installed.

Note that pcscd is compiled to use libusb instead of the default libhal. The targeted system does not have libhal available. So the libusb-1.0 code is shared between pcscd and libccid.

CPU consumption

Start up time

From 13193049 µs (13 s) to 258262 µs (0.3 s) ⇒ factor x51 or 5008%
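The two figures above describe the same improvement in two ways: the factor is the ratio of the old start-up time to the new one, and the percentage is the relative increase in speed, that is (old - new) / new. A quick check of the arithmetic, where the function name `speedup` is only illustrative:

```python
def speedup(old_us, new_us):
    """Return (factor, percent_increase) for a duration that shrank from old_us to new_us."""
    factor = old_us / new_us                    # how many times faster
    percent = (old_us - new_us) / new_us * 100  # relative speed increase
    return factor, percent

factor, percent = speedup(13193049, 258262)
print(f"x{factor:.0f} or {percent:.0f}%")  # prints "x51 or 5008%"
```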

pcsc-lite 1.5.x (and previous versions) used an algorithm whose complexity was exponential in the number of readers supported in an Info.plist file.

pcsc-lite 1.6.x now has a complexity that is linear in the number of readers supported in an Info.plist file.

No more polling

In previous versions the sources of polling were:

- SCardGetStatusChange

This function is used by an application to detect card events or reader events. In the previous implementation the client library libpcsclite regularly asked the daemon for the list of connected readers and the card status.

- Reader hotplug

If libusb is used by pcsc-lite, the USB bus is scanned every second. This is no longer the case with libhal.

- Card events

pcscd asks the card driver for the card status every 200 ms. If the driver supports TAG_IFD_POLLING_THREAD then this is no longer the case. My CCID driver supports this feature.

In version 1.6.x no polling is done by pcsc-lite. So if nothing happens with the reader(s) then the CPU activity of pcsc-lite is 0%.
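The difference between polling and waiting for events can be sketched generically with a condition variable. This is not pcsc-lite's code: `CardMonitor`, `set_state` and `wait_for_change` are hypothetical names used only for illustration. The waiter sleeps inside the condition variable and uses no CPU until the state actually changes (or the timeout expires), instead of waking up at a fixed interval to ask again.

```python
import threading

class CardMonitor:
    """Hypothetical stand-in for a daemon that publishes reader/card state."""

    def __init__(self):
        self._cond = threading.Condition()
        self._state = "absent"
        self._generation = 0  # incremented on every state change

    def set_state(self, state):
        """Called by the event source (e.g. a driver thread) when something happens."""
        with self._cond:
            self._state = state
            self._generation += 1
            self._cond.notify_all()  # wake every blocked waiter

    def wait_for_change(self, last_generation, timeout=None):
        """Block until the generation differs from last_generation or the timeout expires."""
        with self._cond:
            self._cond.wait_for(lambda: self._generation != last_generation, timeout)
            return self._generation, self._state


monitor = CardMonitor()
# Simulate a card insertion 50 ms from now, from another thread.
threading.Timer(0.05, monitor.set_state, args=("present",)).start()
generation, state = monitor.wait_for_change(0, timeout=5.0)
print(generation, state)
```

Passing the last seen generation back in lets a caller notice a change that happened between two calls, which a bare "wake me on the next event" primitive would miss.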

RAM consumption of pcscd

Global

Numbers are from the VSZ field from ps(1)
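For readers who want to reproduce such a measurement without ps(1), a minimal sketch follows. It assumes a Linux system, where the VmSize field of /proc/<pid>/status should correspond to the VSZ value reported by ps. The function names are illustrative only.

```python
def parse_vmsize_kb(status_text):
    """Extract the VmSize value (in kB) from the text of /proc/<pid>/status."""
    for line in status_text.splitlines():
        if line.startswith("VmSize:"):
            return int(line.split()[1])  # line looks like "VmSize:    1180 kB"
    raise ValueError("no VmSize field (kernel thread or non-Linux system?)")

def vsz_kb(pid):
    """Return the virtual memory size of a running process in kB (Linux only)."""
    with open(f"/proc/{pid}/status") as f:
        return parse_vmsize_kb(f.read())

sample = "Name:\tpcscd\nVmPeak:\t    1200 kB\nVmSize:\t    1180 kB\n"
print(parse_vmsize_kb(sample))
```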

With no reader connected:

From 1516 kB to 976 kB ⇒ gain 36% or 540 kB

With one reader connected:

From 1688 kB to 1180 kB ⇒ gain 30% or 508 kB
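The gains quoted in this section are relative to the 1.5.x value, that is (before - after) / before. A small helper, with the illustrative name `reduction`, reproduces the two global figures:

```python
def reduction(before_kb, after_kb):
    """Return (saved_kb, percent_saved), the percentage being relative to before_kb."""
    saved = before_kb - after_kb
    return saved, round(saved / before_kb * 100)

print(reduction(1516, 976))   # no reader connected
print(reduction(1688, 1180))  # one reader connected
```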

text

From 76 kB to 88 kB ⇒ loss 15%

The code has grown, mainly because of the use of a list management library.

heap

With no reader connected:

From 624 kB to 84 kB ⇒ gain 86% or 540 kB

With one reader connected:

From 652 kB to 132 kB ⇒ gain 80% or 520 kB

RAM consumption with optimization for embedded systems

As described in a previous article "pcsc-lite for limited (embedded) systems" it is possible to limit the RAM consumption by disabling features.

--enable-embedded

Both pcsc-lite and libccid support the --enable-embedded flag.

Global consumption with one reader connected, compared to the normal build of version 1.6.x:

From 1180 kB to 1152 kB ⇒ gain 2% or 28 kB

--enable-embedded and --disable-serial

Compared to the previous case:

From 1152 kB to 1144 kB ⇒ gain 1% or 8 kB

Compared to the normal case:

From 1180 kB to 1144 kB ⇒ gain 3% or 36 kB

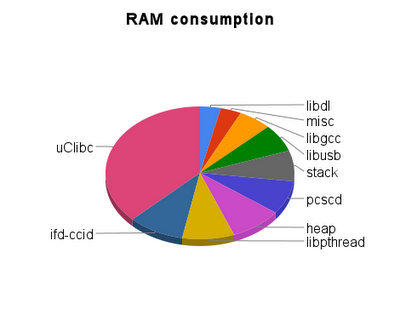

RAM consumption breakdown

The graphic below gives the breakdown (sorted by size) of the RAM used by the different parts of a running pcscd with a CCID reader connected.

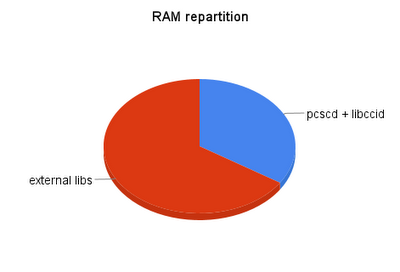

If I separate the different libraries used from pcscd + libpcsclite + heap + stack we have:

The code I have an impact on (pcsc-lite and libccid) is only 34% of the total RAM consumed. So any further improvement will be rather limited.

But the libraries uClibc, libpthread, libgcc and libdl may also be used by other applications and be present only once in memory and shared. So the real RAM consumption is more complex than shown in the graphics.

RAM consumption of libpcsclite.so

Measurements are made using the testpcsc sample program.

libpcsclite text

Without --enable-embedded:

From 36 kB to 32 kB ⇒ gain 11% or 4 kB

With --enable-embedded:

From 36 kB to 24 kB ⇒ gain 33% or 12 kB

libpcsclite data

The communication between pcscd and libpcsclite does not use a shared memory segment any more. So the pcscd.pub RAM-mapped file is no longer used and 64 kB of RAM are saved.

Conclusion

The start-up speed has been greatly improved. This is even more important now that we can start pcscd automatically when needed from libpcsclite.

The use of lists instead of static arrays has decreased the RAM consumed in normal cases and made it possible to use many more contexts.